Numerical Errors in Programs

GOAL

To understand numerical errors in computers.

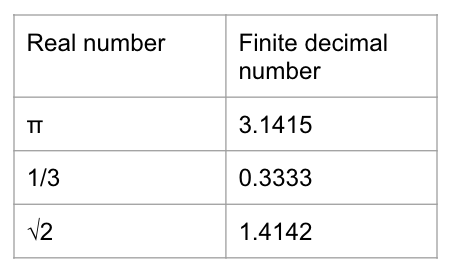

Input data error

Input data error occurs when a real number that cannot be written exactly, such as an infinite decimal, is entered by the user as a finite decimal.
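As a small illustration of this definition, the sketch below measures an input error exactly with Python's `fractions` module. The value 1/3 and the 7-digit entry `0.3333333` are assumptions chosen for the example; they do not come from the text.

```python
from fractions import Fraction

# The value the user means: 1/3, the infinite decimal 0.3333...
intended = Fraction(1, 3)

# What the user can actually type: a finite decimal (7 digits, chosen for illustration).
entered = Fraction("0.3333333")

# Exact rational arithmetic shows the error present before any calculation runs.
input_error = intended - entered
print(input_error)         # 1/30000000
print(float(input_error))  # about 3.3e-08
```

The error is already in the data, so no later calculation can remove it.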

Approximation error

For example, computers obtain square roots by approximate calculation, such as an iterative method or the bisection method.
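The bisection approach mentioned here can be sketched as follows. This is a minimal illustration, not how any particular library computes square roots; the function name `sqrt_bisection` and the starting interval are assumptions made for the example.

```python
def sqrt_bisection(x, iterations):
    """Approximate sqrt(x) for x >= 0 by bisection (illustrative helper)."""
    # sqrt(x) always lies in [0, max(1, x)].
    lo, hi = 0.0, max(1.0, x)
    for _ in range(iterations):
        mid = (lo + hi) / 2
        if mid * mid <= x:
            lo = mid  # the root is in the upper half
        else:
            hi = mid  # the root is in the lower half
    return (lo + hi) / 2

# The approximation error shrinks as the number of iterations grows.
for n in (5, 10, 20, 40):
    approx = sqrt_bisection(2.0, n)
    print(n, approx, abs(approx - 2.0 ** 0.5))
```

Each iteration halves the interval that contains the root, so the result is only ever an approximation whose accuracy depends on how many iterations are run.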

Round-off error

Round-off error arises because each calculation result is rounded to fit the number of significant digits the computer can hold.

1.105982 -> 1.10598

40156.245618 -> 40156.24562
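The two roundings above can be reproduced with Python's `decimal` module. This sketch assumes that both examples round to five decimal places and use round-half-up; neither is stated in the text. `round_to_places` is a hypothetical helper written for this illustration.

```python
from decimal import Decimal, ROUND_HALF_UP

def round_to_places(text, places):
    """Round the decimal string `text` to `places` digits after the point."""
    quantum = Decimal(1).scaleb(-places)  # places=5 gives 0.00001
    return Decimal(text).quantize(quantum, rounding=ROUND_HALF_UP)

for text in ("1.105982", "40156.245618"):
    exact = Decimal(text)
    rounded = round_to_places(text, 5)
    # The difference between the two is the round-off error.
    print(exact, "->", rounded, "round-off error:", exact - rounded)
```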

Truncation error

Truncation error occurs when an iterative calculation, such as summing an infinite series or running an iterative method, is stopped after a finite number of steps, for example because of a time limit.
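As an illustration, the sketch below cuts off the infinite series e^x = 1 + x + x^2/2! + ... after a fixed number of terms. The choice of this series and the function name `exp_series` are assumptions made for the example.

```python
import math

def exp_series(x, terms):
    """Sum the first `terms` terms of the Taylor series of e**x."""
    total, term = 0.0, 1.0
    for k in range(terms):
        total += term
        term *= x / (k + 1)  # next term: x**(k+1) / (k+1)!
    return total

# Stopping early leaves a truncation error that shrinks as more terms are kept.
for n in (3, 5, 10, 20):
    approx = exp_series(1.0, n)
    print(n, approx, abs(approx - math.e))
```

With three terms the sum is 2.5, well short of e (about 2.71828); the discarded tail of the series is the truncation error.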

Loss of significance

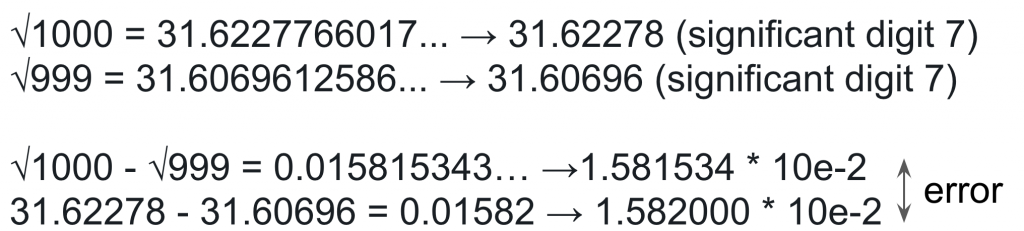

Loss of significance occurs when two nearly equal numbers are subtracted in finite-precision arithmetic: the leading digits cancel and few significant digits remain.

Example

1.222222 - 1.222221 = 0.000001

(7 significant digits) – (7 significant digits) = (1 significant digit)

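Why is loss of significance undesirable?

For example, calculate √1000 – √999 to 7 significant digits: 31.62278 – 31.60696 = 0.01582. Both inputs carry 7 significant digits, but the result carries only 4, and its last digit is already affected by the rounding of the inputs.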
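The calculation of √1000 – √999 can be tried in 7-digit arithmetic with Python's `decimal` module. The rewritten form (1000 – 999) / (√1000 + √999) is a standard algebraic remedy added here for comparison; it is not part of the original example.

```python
from decimal import Context, Decimal

ctx = Context(prec=7)  # every operation result is rounded to 7 significant digits

root_a = ctx.sqrt(Decimal(1000))  # 31.62278
root_b = ctx.sqrt(Decimal(999))   # 31.60696

# Direct subtraction: the leading digits cancel.
naive = ctx.subtract(root_a, root_b)

# Rewritten form: (1000 - 999) / (sqrt(1000) + sqrt(999)), no cancellation.
stable = ctx.divide(Decimal(1), ctx.add(root_a, root_b))

print(naive)   # 0.01582
print(stable)  # 0.01581534
```

To more digits the value is approximately 0.015815343, so the direct subtraction is right to only about three digits while the rewritten form keeps all seven.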

Information loss

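Information loss occurs when a large number and a small number are added or subtracted: the low-order digits of the small number fall outside the available significant digits and are discarded.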

Example

122.2222 + 0.001234567 = 122.2234 (7 significant digits; the exact sum is 122.223434567)
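The example can be checked in 7-digit arithmetic with Python's `decimal` module. The final line, which shows the same effect in binary floating point with `1e16`, is an extra illustration that is not in the original.

```python
from decimal import Context, Decimal

ctx = Context(prec=7)  # keep 7 significant digits, as in the example

big = Decimal("122.2222")
small = Decimal("0.001234567")

rounded_sum = ctx.add(big, small)  # 122.2234
exact_sum = big + small            # default context (28 digits) holds the sum exactly
lost = exact_sum - rounded_sum     # the part of `small` that was discarded

print(rounded_sum, exact_sum, lost)

# The same effect in binary floating point: 1.0 is too small to register next to 1e16.
print(1e16 + 1.0 == 1e16)  # True
```

Most of the digits of the small number (here 0.000034567) never reach the result, which matters when many small values are accumulated into a large total.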